VASP-MGCM (solvation module)


The VASP-MGCM (VASP-Multigrid Continuum Model) module available on tekla2 has been compiled with VASP 5.3.3 (normal and gamma versions). Before running a calculation on a solvated system, PLEASE read the PDF guide first --> vasp_mgcm_guide.pdf. After that, you only need to modify the VASP job script slightly: the only two lines that change are the module load and the executable call. For some reason (most likely the different Fortran libraries available in the tekla2 queues), the code runs at a reasonable computational cost in c8m24.q. If there is enough space in this queue, run your calculation there; otherwise use c24m128ib.q, although it is (at present) much slower than c8m24.q.

- Example of a script to launch a VASP-MGCM calculation in the c8m24.q with the VASP 5.3.3 version (normal version):

    #!/bin/bash
    # - Dr. Nuria Lopez' Group -
    ##########################################
    # SGE Parameters
    ##########################################
    #$ -S /bin/bash
    #$ -N example1
    #$ -cwd
    #$ -masterq c8m24.q
    #$ -pe c8m24_mpi 8
    #$ -m ae
    #$ -o o_$JOB_NAME.$JOB_ID
    #$ -e e_$JOB_NAME.$JOB_ID
    ##########################################
    # Load Environment Variables
    ##########################################
    . /etc/profile.d/modules.sh
    module load vasp/5.3.3-mgarciar
    ##########################################
    # Running Job
    ##########################################
    export OMP_NUM_THREADS=1
    echo $PWD >> o_$JOB_NAME.$JOB_ID
    echo $TMP >> o_$JOB_NAME.$JOB_ID
    mpirun -np $NSLOTS vasp_mgcm

- Example of a script to launch a VASP-MGCM calculation in the c8m24.q with the VASP 5.3.3 version (gamma-version):

    #!/bin/bash
    # - Dr. Nuria Lopez' Group -
    ##########################################
    # SGE Parameters
    ##########################################
    #$ -S /bin/bash
    #$ -N example2
    #$ -cwd
    #$ -masterq c8m24.q
    #$ -pe c8m24_mpi 8
    #$ -m ae
    #$ -o o_$JOB_NAME.$JOB_ID
    #$ -e e_$JOB_NAME.$JOB_ID
    ##########################################
    # Load Environment Variables
    ##########################################
    . /etc/profile.d/modules.sh
    module load vasp/5.3.3-mgarciar-GAMMA
    ##########################################
    # Running Job
    ##########################################
    export OMP_NUM_THREADS=1
    echo $PWD >> o_$JOB_NAME.$JOB_ID
    echo $TMP >> o_$JOB_NAME.$JOB_ID
    mpirun -np $NSLOTS vasp_mgcm
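The two-line change described above can also be applied to an existing job script automatically. This is only a minimal sketch: it assumes that your script loads VASP with a line starting with `module load vasp/` and that the executable is the last word of the `mpirun` line. The helper name `to_mgcm` is made up for illustration.

```shell
# to_mgcm SCRIPT [normal|gamma]
# Print a copy of a VASP job script with the module and executable lines
# switched to VASP-MGCM. The original file is not modified.
to_mgcm() {
    local script=$1 flavour=${2:-normal}
    local module=vasp/5.3.3-mgarciar
    if [ "$flavour" = gamma ]; then
        module=vasp/5.3.3-mgarciar-GAMMA
    fi
    # 1st expression: swap the module; 2nd: swap the last word of the mpirun line.
    sed -e "s|^module load vasp/.*|module load $module|" \
        -e '/^mpirun/ s|[^ ]*$|vasp_mgcm|' "$script"
}
```

For example, `to_mgcm run_vasp.sh gamma > run_mgcm.sh` would write the gamma-version script to a new file (`run_vasp.sh` is a placeholder name). Check the result by eye before submitting.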

For further details about VASP-MGCM, please read: J. Chem. Theory Comput., 2016, 12 (3), pp 1331–1341. If you have further questions, send an e-mail to Dr. Miquel Garcia-Ratés (ask Nuria Vendrell for the e-mail address).

Yecheng's update

Yecheng made some changes to Miquel's code and removed the memory limit. This version can be run on Tekla and MareNostrum4 with good parallel efficiency (efficiency tests are shown below). No modifications were made to the calculation part of the code, so the procedure is the same as for Miquel's code. However, problems were sometimes encountered; ideas for solving them are listed below.

Tekla

In Tekla, the code can be found in the folder: /home/yzhou/software/vasp53mgcm_yc

You may either run it directly from there, by adding export PATH=/home/yzhou/software/vasp53mgcm_yc:$PATH to your submission script, or copy the folder into your Tekla home (e.g. into your own "software" folder).

The version you should use is vasp100lmto3 (see the explanation below).
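Before submitting, it can be worth confirming that the executable really resolves from the folder you chose. A minimal sketch follows; `use_mgcm_build` is a hypothetical helper name, and the directory you pass is whichever copy of the folder you decided to use.

```shell
# use_mgcm_build DIR [EXE]
# Prepend DIR to PATH and print the full path the executable resolves to.
# Fails if DIR does not contain an executable with that name.
use_mgcm_build() {
    local dir=$1 exe=${2:-vasp100lmto3}
    if [ ! -x "$dir/$exe" ]; then
        echo "no executable $exe in $dir" >&2
        return 1
    fi
    export PATH="$dir:$PATH"
    command -v "$exe"
}
```

In a job script this would be called as `use_mgcm_build /home/yzhou/software/vasp53mgcm_yc || exit 1` before the `mpirun` line.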

In addition, you need to load the following modules:

    module load Intel_MKL/14.0.2.144
    module load Intel_Parallel_XE_Cluster/2017
    module load Intel_Compiler_Suite/14.0.2.144

Jobscript for Tekla:

    #!/bin/bash
    # - Prof. Lopez Group -
    ##########################################
    # SGE Parameters
    ##########################################
    #$ -S /bin/bash
    #$ -N jobname
    #$ -masterq c24m128ib.q
    #$ -pe c24m128ib_mpi 24
    #$ -cwd
    #$ -M yourmail@iciq.es
    #$ -m ae
    #$ -o $JOB_ID.out
    #$ -e $JOB_ID.err
    ##########################################
    # Load Environment Variables
    ##########################################
    . /etc/profile.d/modules.sh
    module load Intel_Parallel_XE_Cluster/2017
    module load Intel_MKL/14.0.2.144
    module load Intel_Compiler_Suite/14.0.2.144
    export PATH=/home/yourhome/software/vasp53mgcm_yc:$PATH
    export OMP_NUM_THREADS=1
    ###########RUN JOB########################
    mpirun -np $NSLOTS vasp100lmto3

Several builds of VASP are provided, compiled with different optimization levels (O0 and O3). The build with the higher level, i.e. vasp100lmto3, usually runs faster. In one of my tests the O3 build was about three times faster than the O0 build; in other cases the improvement can be very small.

If you encounter strange errors, especially on C28 nodes, you may try the build without optimization (vasp*O0). Most of the strange errors on C28 have nothing to do with MGCM: the standard version (without the solvent modification) shows the same errors. You may be able to fix this kind of error by setting NPAR to 4 or 7, or by removing the NPAR tag.

Marenostrum3

In Marenostrum, the code can be found in the folder: /home/iciq72/iciq72749/soft/vasp53mgcm

Again, copy it to your home or run it from there as in the submission script below. Jobscript for Marenostrum3:

    #!/bin/bash
    #############################
    #####SBATCH --qos=debug
    #SBATCH -J jobname
    #SBATCH -n 48
    #SBATCH -t 72:00:00
    #SBATCH -o %x-%j.out
    #SBATCH -e %x-%j.err
    #SBATCH -D .
    #############################
    #########environment#########
    module purge
    module load intel
    module load impi
    module load mkl
    export PATH=/home/iciq72/iciq72749/soft/vasp53mgcm:$PATH
    # alternatively put it in your own home directory and export path there
    export OMP_NUM_THREADS=1
    ########run vasp#################
    mpirun vasp

MareNostrum4

    #!/bin/bash
    #SBATCH --qos=class_a
    #SBATCH --time=23:59:00
    #SBATCH --job-name=test
    #SBATCH --cpus-per-task=1
    #SBATCH --tasks-per-node=48
    #SBATCH --ntasks=48
    ####### WARNING! Use ONLY 48 processors for mgcm (1 node).
    #SBATCH --output=o_test.%j
    #SBATCH --error=e_test.%j
    module purge
    module load intel
    module load impi
    module load mkl
    export PATH=/gpfs/projects/iciq72/yecheng-mgcm/soft/vasp53mgcm:$PATH
    srun /gpfs/projects/iciq72/yecheng-mgcm/soft/vasp53mgcm/vasp
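The one-node warning in the script above can be enforced with a small guard placed before the `srun` line. This is only a sketch: `require_single_node` is a hypothetical helper name, and it relies on the `SLURM_JOB_NUM_NODES` variable that SLURM sets inside a job.

```shell
# require_single_node
# Fail if the SLURM allocation spans more than one node.
# Outside a SLURM job the variable is unset and one node is assumed.
require_single_node() {
    local nodes=${SLURM_JOB_NUM_NODES:-1}
    if [ "$nodes" -gt 1 ]; then
        echo "MGCM should run on one node, but $nodes were allocated" >&2
        return 1
    fi
}
```

In the job script it would be used as `require_single_node || exit 1`, so that a job accidentally submitted on two nodes stops immediately instead of consuming allocation.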

Tips

- Do not optimise the structure with solvent switched on; the calculations were found to crash after several steps.

Instead, optimise with LSOLV = .FALSE. and then set the following for the solvent calculation:

    # ionic convergence
    IBRION = 1   # !!!
    NSW = 1      # only one step!

!!! Do not set IBRION = -1

- Only inherit WAVECAR, never CHGCAR:

ISTART = 1

But never: ICHARG = 1

- Do not keep the CHGCAR in the same folder as the solvent calculation! Even if it is not read, its mere existence causes the calculation to crash.

- If you still experience problems with the electronic convergence, you may first run the calculation in a dielectric medium with a small dielectric constant and then increase it in successive runs, e.g.:

EPSINF = 5, 10, 20, 78.50

- Set:

LREAL = Auto

With this setting VASP evaluates the projection operators in real space and optimises them automatically.

- Read the manual carefully again: vasp_mgcm_guide.pdf.
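The tips on optimising in vacuum first, inheriting only the WAVECAR and keeping the CHGCAR out of the folder can be combined into one preparation step. The sketch below is an illustration under assumptions: `prepare_solvent_run` is a hypothetical helper, the INCAR is assumed to hold one tag per line, and any other MGCM tags described in the guide still have to be added by hand.

```shell
# prepare_solvent_run VAC_DIR SOL_DIR
# Build the input for the single-point solvent run from a finished vacuum
# optimisation. WAVECAR is inherited; CHGCAR is deliberately left out.
prepare_solvent_run() {
    local vac=$1 sol=$2 f
    mkdir -p "$sol" || return 1
    for f in KPOINTS POTCAR WAVECAR; do
        cp "$vac/$f" "$sol/" || return 1
    done
    # Start from the optimised geometry of the vacuum run.
    cp "$vac/CONTCAR" "$sol/POSCAR" || return 1
    # Never keep a CHGCAR next to the solvent calculation.
    rm -f "$sol/CHGCAR"
    # Copy INCAR without the tags that are set explicitly below.
    awk '!/^[ \t]*(LSOLV|IBRION|NSW|ISTART|ICHARG)[ \t]*=/' \
        "$vac/INCAR" > "$sol/INCAR" || return 1
    cat >> "$sol/INCAR" <<'EOF'
LSOLV  = .TRUE.
IBRION = 1
NSW    = 1
ISTART = 1
EOF
}
```

A call such as `prepare_solvent_run vacuum solvent` (both directory names are placeholders) leaves the vacuum run untouched and writes everything into the new folder.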

Tips (Rodrigo and Ranga)

Along with the tips suggested above, these are a few other things to try or keep in mind while doing solvent calculations using VASP-MGCM. These are especially useful to try when the calculations crash.

- Use ISMEAR = 0, since ISMEAR >= 1 (Methfessel-Paxton smearing) can lead to negative electron occupancies, which are nonphysical and might cause the calculation to crash.

- It is recommended to switch off the dipole corrections (LDIPOL = .FALSE.).

- Do not parallelize the job over several nodes; run it on 1 node (c8m24_mpi 8).

- Switch off symmetry: ISYM = 0

- Decreasing the vacuum in the unit cell may also help with the convergence of the calculation.
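Several of the tips in the two lists above concern INCAR settings, so they can be checked mechanically before a job is submitted. The following is a minimal sketch, not a complete validator: `check_mgcm_incar` is a hypothetical helper, it only looks at tags that are set explicitly, and it assumes one tag per line.

```shell
# check_mgcm_incar [INCAR]
# Print a warning for each explicitly set tag that the tips advise against.
# Returns 0 if nothing was flagged and 1 otherwise.
check_mgcm_incar() {
    awk '
        function warn(msg) { print "WARNING: " msg; bad = 1 }
        # Strip comments, then skip lines that do not assign a tag.
        { sub(/[#!].*/, "") }
        !/=/ { next }
        {
            split($0, kv, "=")
            tag = toupper(kv[1]); val = toupper(kv[2])
            gsub(/[ \t]/, "", tag); gsub(/[ \t]/, "", val)
        }
        tag == "ISMEAR" && val + 0 >= 1  { warn("ISMEAR >= 1: use ISMEAR = 0") }
        tag == "LDIPOL" && val ~ /^\.?T/ { warn("LDIPOL is on: switch it off") }
        tag == "ISYM"   && val + 0 != 0  { warn("ISYM is not 0: try ISYM = 0") }
        tag == "ICHARG" && val + 0 == 1  { warn("ICHARG = 1: never read CHGCAR") }
        tag == "IBRION" && val + 0 == -1 { warn("IBRION = -1: use IBRION = 1, NSW = 1") }
        END { exit bad + 0 }
    ' "${1:-INCAR}"
}
```

Run without arguments, it checks the INCAR in the current folder; a clean file produces no output.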

Finding the error

It often takes a lot of trial and error before you get your system to run. But there are some things to check in your output that may give you a clue about what went wrong:

- Check your error file (e-xxx, o-xxx files; slurm-xxx file in Marenostrum):

- If the electronic optimisation started and crashed after the first electronic step, the likely reason is that your WAVECAR was erroneous or not well converged.

- If the electronic optimisation started and crashed after the first NELMDL non-self-consistent steps (default is 5), something went wrong when the solvent was added. This may possibly be solved by using a smaller EPSINF (not yet tried).

- If the electronic optimisation did not start at all, check whether there is a memory-related error, such as 'insufficient virtual memory' or 'Out of memory'. This might be solved by reducing the cut-off energy.

Often this problem can be solved by using C24 nodes instead of C28 or Marenostrum nodes. At BSC and on C28 some software is over-optimised (maybe the mkl or intel modules), which can lead to problems. C24 is generally more stable!

- Check the ERROR file: it gives you the relative error (ERROR RELATIU) when going step-wise from a large grid to a small grid, as described in the MGCM algorithm. Usually the relative error should decrease. If it suddenly increases to a very high number, there might be an error in the grid partitioning that has caused a division by zero. Check carefully that the grids in vacuum and in solvent are equal!

- Check the fort.71 file, which gives you the grid partitions in real and reciprocal space. Check whether they are the same for the calculations with and without solvent.
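Two of the checks above are easy to script. The sketch below makes some assumptions: the helper names are made up, and `same_grids` treats fort.71 as containing nothing but the grid partitions, so that a byte-for-byte comparison is meaningful. If it reports a difference, inspect the two files with `diff` before drawing conclusions.

```shell
# find_memory_errors FILE...
# List the output files that contain one of the memory-related messages.
find_memory_errors() {
    grep -il -e 'insufficient virtual memory' -e 'out of memory' "$@"
}

# same_grids VAC_DIR SOL_DIR
# Succeed only if fort.71 is identical in the vacuum and solvent folders.
same_grids() {
    cmp -s "$1/fort.71" "$2/fort.71"
}
```

Typical use would be `find_memory_errors e_* o_*` in the run folder, and `same_grids vacuum solvent || echo "grid partitions differ"` (directory names are placeholders).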

Benchmarks

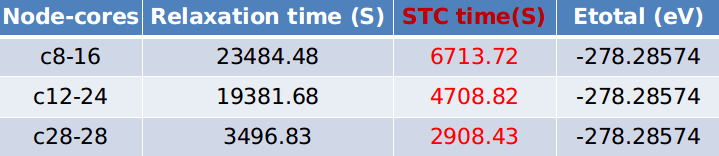

- Tekla:

Parallel test:

Bigger model test: Warning: models calculated with a higher ENCUT show lower energies. This is not a problem specific to VASP-MGCM; VASP-SOL has the same problem.

- Marenostrum

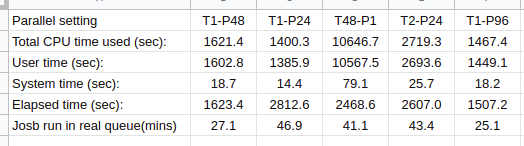

The first line is the parallel setting: "T2" means that the number of threads per process is 2, and "P24" means that the number of processes is 24. The product of the thread count and the process count is the total number of CPUs used. "Total CPU time used (sec):" is the time the CPUs spent on the calculation; it shows no improvement at all. However, the time that actually passes, and also the time BSC uses to account for our usage, is the wall time, "Elapsed time (sec):". It is very odd that the total CPU time differs so much from the elapsed time.

If we define the efficiency of a calculation on one node with 48 CPUs as 1, then the job running on 24 CPUs has the highest efficiency. However, as far as I remember, BSC accounts CPU time by the number of nodes used, so a job on 24 CPUs costs us the same as one on 48 CPUs.

The efficiency of the jobs run on 2 nodes with 96 CPUs is 0.54, so there is no real improvement. Therefore, I do not suggest using this setting, except for jobs that need a lot of memory.

I strongly suggest using one node with 48 CPUs for MGCM calculations.
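The text does not spell out how the efficiency is computed. One definition that is consistent with "one node with 48 CPUs = 1" is the per-CPU efficiency, (reference CPUs x reference elapsed time) / (CPUs x elapsed time); this formula is an assumption, not taken from the benchmark itself. The helper names below are made up, and whole-node accounting with 48 cores per node is assumed for `charged_cpus`.

```shell
# efficiency REF_CPUS REF_SECONDS CPUS SECONDS
# Per-CPU efficiency relative to the reference run (the reference gives 1.00).
efficiency() {
    awk -v n0="$1" -v t0="$2" -v n="$3" -v t="$4" \
        'BEGIN { printf "%.2f\n", (n0 * t0) / (n * t) }'
}

# charged_cpus CPUS [CORES_PER_NODE]
# Number of CPUs accounted if only whole nodes are charged.
charged_cpus() {
    local n=$1 per_node=${2:-48}
    echo $(( (n + per_node - 1) / per_node * per_node ))
}
```

With made-up timings, a job that takes 1000 s on 48 CPUs and still 1000 s on 96 CPUs gives `efficiency 48 1000 96 1000`, which prints 0.50, while `charged_cpus 24` prints 48, illustrating why a half-filled node costs the same as a full one under this accounting.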