VASP-MGCM (solvation module)


The VASP-MGCM (VASP-Multigrid Continuum Model) module available on tekla2 has been compiled for VASP 5.3.3 (standard and gamma versions). Before running a calculation on a solvated system, PLEASE read the PDF guide first --> vasp_mgcm_guide.pdf. After that, you only need to modify the VASP job script slightly: the only two lines that change are the module load and the executable call. For some reason (most likely the different Fortran libraries available in the tekla2 queues), the code runs at a reasonable computational cost in c8m24.q. If there are free slots in this queue, run your calculation there; otherwise use c24m128ib.q, although at present it is much slower than c8m24.q.

- Example of a script to launch a VASP-MGCM calculation in the c8m24.q with the VASP 5.3.3 version (normal version):

    #!/bin/bash
    # - Dr. Nuria Lopez Group -
    ##########################################
    # SGE Parameters
    ##########################################
    #$ -S /bin/bash
    #$ -N example1
    #$ -cwd
    #$ -masterq c8m24.q
    #$ -pe c8m24_mpi 8
    #$ -m ae
    #$ -o o_$JOB_NAME.$JOB_ID
    #$ -e e_$JOB_NAME.$JOB_ID
    ##########################################
    # Load Environment Variables
    ##########################################
    . /etc/profile.d/modules.sh
    module load vasp/5.3.3-mgarciar
    ##########################################
    # Running Job
    ##########################################
    export OMP_NUM_THREADS=1
    echo $PWD >> o_$JOB_NAME.$JOB_ID
    echo $TMP >> o_$JOB_NAME.$JOB_ID
    mpirun -np $NSLOTS vasp_mgcm

- Example of a script to launch a VASP-MGCM calculation in the c8m24.q with the VASP 5.3.3 version (gamma-version):

    #!/bin/bash
    # - Dr. Nuria Lopez Group -
    ##########################################
    # SGE Parameters
    ##########################################
    #$ -S /bin/bash
    #$ -N example2
    #$ -cwd
    #$ -masterq c8m24.q
    #$ -pe c8m24_mpi 8
    #$ -m ae
    #$ -o o_$JOB_NAME.$JOB_ID
    #$ -e e_$JOB_NAME.$JOB_ID
    ##########################################
    # Load Environment Variables
    ##########################################
    . /etc/profile.d/modules.sh
    module load vasp/5.3.3-mgarciar-GAMMA
    ##########################################
    # Running Job
    ##########################################
    export OMP_NUM_THREADS=1
    echo $PWD >> o_$JOB_NAME.$JOB_ID
    echo $TMP >> o_$JOB_NAME.$JOB_ID
    mpirun -np $NSLOTS vasp_mgcm

For further details about VASP-MGCM, please read: J. Chem. Theory Comput., 2016, 12 (3), pp 1331–1341. If you have further questions, send an e-mail to Dr. Miquel Garcia-Ratés (ask Nuria Vendrell for the e-mail address).

Yecheng's update


I made some changes to Miquel's code to remove the memory limit. This version runs on Tekla and Marenostrum4 with good parallel efficiency. I did not modify the calculation part, so all calculation procedures are the same as before and no special action is needed. You do, however, have to load the right environment. The job scripts are given here:

- The script for Tekla:

    #!/bin/bash
    # - Dra. Nuria's Lopez Group -
    ##########################################
    # SGE Parameters
    ##########################################
    #$ -N MGCM_YC
    #$ -masterq c28m128ib.q
    #$ -pe c28m128ib_mpi 28
    #$ -cwd
    ###$ -M yzhou@iciq.es
    #$ -m bae
    touch $JOB_NAME.MACHINES.$JOB_ID
    cat $TMP/machines >> $JOB_NAME.MACHINES.$JOB_ID
    #$ -o $JOB_ID.out
    #$ -e $JOB_ID.err
    ##########################################
    # Load Environment Variables
    ##########################################
    . /etc/profile.d/modules.sh
    module load Intel_MKL/14.0.2.144
    module load Intel_Parallel_XE_Cluster/2017
    module load Intel_Compiler_Suite/14.0.2.144
    export PATH=/home/yzhou/software/vasp53mgcm_yc:$PATH
    export OMP_NUM_THREADS=1
    ###########RUN JOB########################
    mpirun -np $NSLOTS vasp100lmto3 # vasp100lmto0

Several builds of VASP are provided, compiled at different optimization levels (O0 and O3). The build with the higher optimization level usually runs faster; vasp*O3 is optimized at the highest level. In one of my tests the O3 build was about three times faster than the O0 build, although sometimes the improvement is very small. I strongly suggest you use the O3 build (vasp*O3).

If you encounter strange errors, especially on the C28 nodes, try the build without optimization (vasp*O0). Most of the strange errors on C28 have nothing to do with MGCM: the standard version (without the solvent modification) gives the same errors. You may be able to fix this kind of error by setting NPAR to 4 or 7 in the INCAR, or by removing NPAR altogether.
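The NPAR change mentioned above can be scripted when many job directories need the same fix. The following is a minimal sketch, not part of VASP or VASP-MGCM: `set_npar` is a hypothetical helper name, and it assumes the tag is written as `NPAR = value` on a line of its own in the INCAR.

```shell
#!/bin/bash
# Hypothetical helper (an illustration, not part of VASP or VASP-MGCM).
# Usage: set_npar <INCAR file> <value|none>
# Removes any existing NPAR line, then appends "NPAR = <value>"
# unless the second argument is the literal word "none".
set_npar() {
    local incar="$1" value="$2" tmp
    tmp="$(mktemp)"
    # Copy every line except an existing NPAR assignment
    # (case-insensitive, leading blanks allowed).
    grep -v -i -E '^[[:space:]]*NPAR[[:space:]]*=' "$incar" > "$tmp" || true
    if [ "$value" != "none" ]; then
        echo "NPAR = $value" >> "$tmp"
    fi
    # Write back in place so the original file permissions are kept.
    cat "$tmp" > "$incar"
    rm -f "$tmp"
}
```

For example, `set_npar INCAR 4` would set NPAR to 4, and `set_npar INCAR none` would remove the tag so that VASP falls back to its default.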

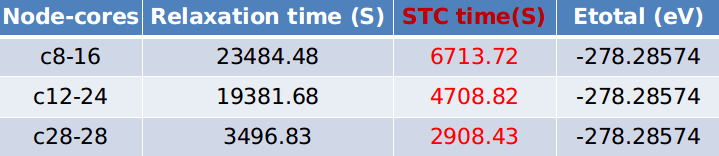

Benchmarks:

Parallel test:

Bigger model test:

Warning: Models calculated with a higher ENCUT give lower energies. This is not a problem specific to VASP-MGCM; VASP-SOL shows the same behavior.

- The script for Marenostrum:

    #!/bin/bash
    #############################
    #####SBATCH --qos=debug
    #SBATCH -J test_mpi
    #SBATCH -n 48
    #SBATCH -t 2:00:00
    #SBATCH -o %x-%j.out
    #SBATCH -e %x-%j.err
    #SBATCH -D .
    #############################
    #########environment#########
    module purge
    module load intel
    module load impi
    module load mkl
    export PATH=/home/iciq72/iciq72749/soft/vasp53mgcm:$PATH
    export OMP_NUM_THREADS=1
    ########run vasp#################
    mpirun vaspo3

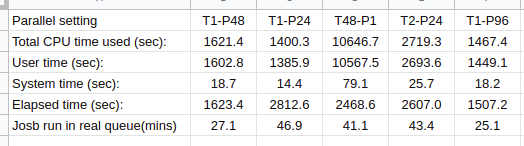

Here is the benchmark.

The first line is the parallel setting: "T2" means 2 threads per process, and "P24" means 24 processes. The product of the thread count and the process count is the total number of CPUs used. "Total CPU time used (sec):" is the time the CPUs spent on the calculation; it shows no improvement at all. However, the time that actually passes, and also the time BSC uses to account for our usage, is the elapsed time, "Elapsed time (sec):". It is strange that the total CPU time differs so much from the elapsed time.
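To compare the two timings across several runs, they can be pulled out of each OUTCAR with a few lines of shell. This is a small sketch; `outcar_times` is a hypothetical helper name, and it assumes the two labels appear in the OUTCAR exactly as quoted above, followed by the value in seconds.

```shell
#!/bin/bash
# Hypothetical helper: print the CPU time and the elapsed (wall-clock)
# time, in seconds, found in a VASP OUTCAR file.
# Usage: outcar_times <OUTCAR file>
outcar_times() {
    awk -F: '
        /Total CPU time used \(sec\)/ { cpu  = $2 }   # value after the colon
        /Elapsed time \(sec\)/        { wall = $2 }
        END { printf "cpu=%.3f elapsed=%.3f\n", cpu, wall }
    ' "$1"
}
```

Calling `outcar_times OUTCAR` in each job directory gives one line per run, which makes it easier to spot runs where the two values diverge.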

If we define the efficiency of a calculation on one node with 48 CPUs as 1, then the job running with 24 CPUs has the highest efficiency. However, as far as I remember, BSC charges CPU time based on the number of nodes used, so a job using 24 CPUs costs us the same as one using 48 CPUs.

The efficiency of jobs run on 2 nodes with 96 CPUs is 0.54, so there is no actual improvement. I therefore do not recommend this setting, except for jobs that need a large amount of memory.
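A relative efficiency of this kind can be computed from the elapsed times. The sketch below assumes efficiency is defined as the cost of the reference run divided by the cost of the job, with cost taken as CPUs × elapsed time; that definition and the helper name `parallel_efficiency` are assumptions for illustration, not necessarily how the figures above were obtained.

```shell
#!/bin/bash
# Hypothetical helper: efficiency of a job relative to a reference run,
# assuming efficiency = (ref_cpus * ref_elapsed) / (cpus * elapsed).
# Usage: parallel_efficiency <ref_cpus> <ref_elapsed_s> <cpus> <elapsed_s>
parallel_efficiency() {
    awk -v rc="$1" -v rt="$2" -v c="$3" -v t="$4" \
        'BEGIN { printf "%.2f\n", (rc * rt) / (c * t) }'
}
```

With this definition, a job on twice as many CPUs that takes the same elapsed time as the reference has an efficiency of 0.50. Because BSC is said to charge by node, it may make more sense to pass the number of CPUs charged (48 per node) rather than the number actually used.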

I strongly suggest you use one node with 48 CPUs to perform MGCM calculations.